Eve-Sense Announces the Release of New Game “Little Pet...

Eve-Sense Inc. (Headquarters: Sumida, Tokyo; CEO: Kosuke Shimizu, hereinafter "our company") is proud to announce the launch of Li...

TUFF Unveils "The Mystery of the Green Menace" Just in ...

TUFF Unveils "The Mystery of the Green Menace" Just in Time for Christmas. Indianapolis, IN, November 18, 2023 – TUFF (Teenagers Un...

Television Personality and Speaker Angie Mizzell Pens N...

Former TV journalist and news anchor Angie Mizzell will release her debut memoir, Girl in the Spotlight, on October 3, 2023, publi...

Gift Card Pro – World’s First Book that Spills the Bean...

FOR IMMEDIATE RELEASE. LONG BEACH, CA - In a generous move to help consumers unlock the full potential of their gift cards, Michael ...

Best VPN for Low Latency

The search for the best VPN for low-latency connectivity is ongoing, and with the right VPN in hand, you can navigate the digital landscape seamlessl...

Online News Distribution Service with PR Wires Boost? V...

Learn how online news distribution services, paired with PR wires, can elevate your brand's visibility and reach in the digital la...

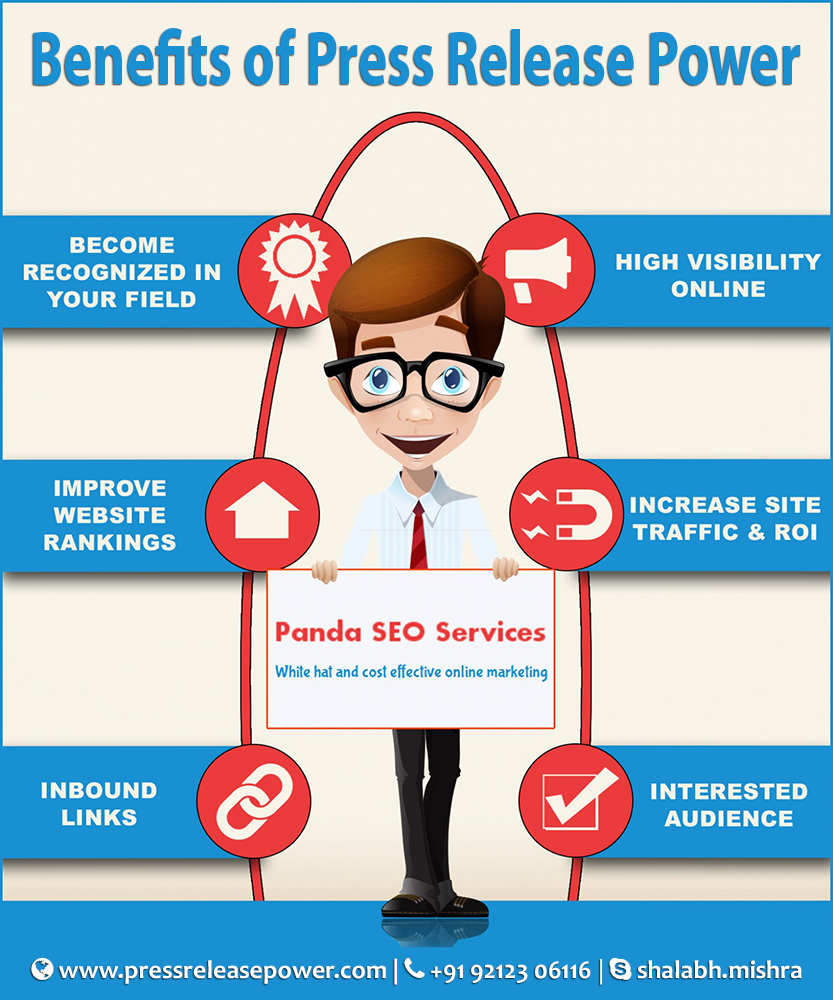

The Ultimate Guide to Best Press Release Distribution

Discover the ultimate tips for press release distribution in this comprehensive guide. Learn how to maximize your reach and impact...

The Power of Best Press Release Distribution

Discover the power of press release distribution and maximize your reach. Learn how to leverage the best practices for effective disseminat...

Best Press Release Distribution Tactics Revealed

Discover the best press release distribution tactics. Learn how to effectively distribute your press releases online for maximum exposure a...

How Best Press Release Distribution Boosts Brand Visibi...

Learn how press release distribution boosts brand visibility. Discover top tips for effective distribution strategies.

The Importance of Best Press Release Distribution

Discover the significance of press release distribution and why it's crucial for your business. Learn how to maximize its impact t...